The prevalence of laser diffraction technology for routine particle size analysis across a diverse range of industrial sectors is attributable to two key factors: the widespread need for particle size data and the ease of use of this technology. Here we consider why so many manufacturers need to measure particle size and why laser diffraction is so often the method of choice.

The penetration of an analytical technique into the industrial arena, and the extent to which it makes the transition from expert assignment to routine task, is a function both of the value of the information it provides and the ease with which data can be acquired. For manufacturers of solid products, particle size is often a critical parameter where final product performance is linked to particle size and/or size distribution. For solids processors this makes particle size information practically a universal requirement.

Laser diffraction presents a robust solution for particle size measurement across a wide variety of applications, and in many industrial sectors it is now the technique of choice. Over the last decade or so laser diffraction analyzers have become more flexible, easier to use, and highly automated. Many industrial users now rely heavily on such systems not only in the laboratory for development and QC, but also for routine monitoring at both pilot- and full-scale production. Off-line measurement can now be as simple as loading the sample and pushing a button while on-line systems measure in real-time, at rates fast enough to track even rapidly changing processes.

A good starting point from which to develop an understanding of the continuing appeal of laser diffraction is to examine why the data are required. This sets the brief to which laser diffraction delivers, and must continue to deliver.

Looking across the applications of laser diffraction in manufacturing environments, it is clear that different industries measure particle size for essentially the same reasons. Take the rather dissimilar areas of fuel atomization and cement manufacture: both need to control particle size in order to manipulate the rate of the chemical reactions that occur when their products are used (combustion and hydration respectively). It is therefore illuminating to review some of the most important properties influenced, or in some cases controlled, by particle size.

For solids, the rate at which a chemical reaction occurs is often a function of the specific surface area of the particles involved, the amount of surface area per unit mass. If specific surface area is increased then, generally speaking, mass transfer barriers to reaction decrease. Or to put it more simply, the finer the particle population the easier it is for reactants to reach and react with a particle. Catalyst manufacturers share with cement producers this requirement to tailor particle size in order to deliver the desired reaction rate.
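
As a rough illustration of this relationship, the sketch below assumes smooth, non-porous spheres of a single diameter, for which specific surface area reduces to 6/(ρd). The function name and the density value are assumptions made for the example; real powders, with irregular shapes and broad size distributions, will deviate from it.

```python
def specific_surface_area(diameter_um: float, density_kg_m3: float) -> float:
    """Specific surface area in m^2/kg for smooth, solid, equal-sized spheres.

    Assumption: area / mass = (pi d^2) / (rho * pi d^3 / 6) = 6 / (rho * d).
    """
    if diameter_um <= 0 or density_kg_m3 <= 0:
        raise ValueError("diameter and density must be positive")
    diameter_m = diameter_um * 1e-6  # convert microns to metres
    return 6.0 / (density_kg_m3 * diameter_m)


if __name__ == "__main__":
    density = 3000.0  # kg/m^3, illustrative value only
    for d in (100.0, 10.0, 1.0):
        ssa = specific_surface_area(d, density)
        print(f"{d:6.1f} um -> {ssa:10.1f} m^2/kg")
```

Under these assumptions, each tenfold reduction in diameter gives a tenfold increase in specific surface area.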

The influence of particle size on the rate of dissolution closely mirrors its impact on reaction rate. Increasing specific surface area, by reducing particle size, decreases the physical impediments to dissolution, thereby accelerating the process. A finer particle population dissolves more quickly, all other factors being constant.

This correlation with particle size is a particularly important one for the pharmaceutical industry, since the rate of dissolution of an active ingredient in vivo affects bioavailability. Likewise agrochemical and detergent manufacturers must measure and manage particle size in order to control the dissolution and release rates of active components within a formulation.

The way in which particles pack together is a function of both particle size and distribution. Larger particles pack less efficiently than smaller ones, leaving larger void spaces. For monodisperse materials, decreasing the particle size will therefore improve packing density and reduce voidage. A broader particle size distribution allows smaller particles to pack into the spaces between larger ones, enhancing packing efficiency.

Controlling particle packing is crucial for the successful production of ceramic and metal components via mould filling processes. Achieving an efficient fill with little voidage ensures a strong, flaw-free product, post melting/sintering. Powder coating manufacturers manipulate size and distribution to achieve similar goals. Optimally sized, closely-packed particles melt efficiently at lower temperatures, leaving more time for cross-linking reactions between the polymeric particles during the melting process. The result is a better quality surface finish.

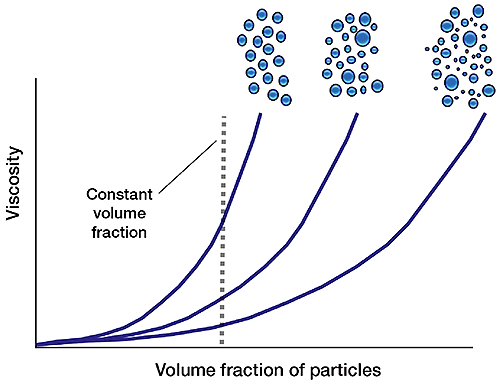

In suspensions, particle packing influences rheological behaviour, principally viscosity. For a given volume fraction of suspended solids, increasing polydispersity (the breadth of the particle size distribution) reduces viscosity. Because a mixture of large and small particles packs more efficiently, the solids present have less of an impact on viscosity. This effect can be exploited to increase the solids loading of a product, such as a paint or ceramic suspension, without compromising viscosity (see 'Spotlight on ceramics').

With respect to a suspension, stability means the avoidance of sedimentation. A stable emulsion on the other hand is one in which the droplets remain discrete rather than coalescing to form a continuous immiscible phase. The size of particles/droplets is influential in both cases.

Suspension stability relies on balancing the gravitational pull exerted on the particles, a function of particle size and density, against the up-thrust of the suspending fluid, which depends on viscosity. This is a routine exercise in the formulation of medicines, where instability can lead to inconsistent dosing, and in the food industry where settling can compromise customer perception of a product.
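
One common way to put numbers on this balance is Stokes' law, used here as a simplifying assumption rather than as the method of any particular formulator. It applies to isolated, rigid spheres settling slowly in a Newtonian fluid; the function name and the property values below are illustrative only.

```python
G = 9.81  # gravitational acceleration, m/s^2


def stokes_settling_velocity(diameter_um: float,
                             particle_density: float,
                             fluid_density: float,
                             viscosity_pa_s: float) -> float:
    """Terminal settling velocity in m/s (positive means sinking).

    Stokes' law: v = (rho_p - rho_f) * g * d^2 / (18 * mu).
    Assumes dilute suspension, spherical particles, low Reynolds number.
    """
    if diameter_um <= 0 or viscosity_pa_s <= 0:
        raise ValueError("diameter and viscosity must be positive")
    d = diameter_um * 1e-6  # microns to metres
    return (particle_density - fluid_density) * G * d ** 2 / (18.0 * viscosity_pa_s)


if __name__ == "__main__":
    # Hypothetical solid (2000 kg/m^3) in a water-like fluid (1000 kg/m^3, 1 mPa.s)
    for d in (1.0, 10.0, 50.0):
        v = stokes_settling_velocity(d, 2000.0, 1000.0, 0.001)
        print(f"{d:5.1f} um settles at {v:.3e} m/s")
```

Because velocity scales with the square of diameter, halving the particle size cuts the predicted settling rate by a factor of four, while raising viscosity reduces it in direct proportion.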

In emulsions, larger droplets have a greater tendency to cream and coalesce, but producing smaller droplets is more energy intensive, due to the increase in surface area which occurs as a result of homogenization. Particle size analysis is therefore used to assess the likelihood of creaming, as well as to monitor stability to flocculation and coalescence over time. The droplet size and degree of flocculation within an emulsion may also impact the performance characteristics of the product - the mouth feel of a food or the viscosity of a cream liqueur for example. Particle size measurement is therefore used routinely when optimizing an emulsion's characteristics.

Human respiratory systems very successfully filter out particles above a certain size to secure the integrity of our air supply and prevent irritation to the lungs. This classification process gives rise to two distinct reasons for measuring particle size in connection with ease of inhalation: to prevent a product from being inhaled; or to ensure successful deposition of a drug within the pulmonary region.

For all orally inhaled and nasal drug products (OINDPs), particle size is a critical parameter with clear size ranges recommended for deposition and retention in the nasal cavity and for penetration to different areas of the lung. On the other hand, manufacturers of, for example, cleaning products and hairsprays, control fines to prevent inhalation. Particle size analysis is therefore key to safety testing, for instance as part of a REACH study.

The way in which a particle scatters light depends upon its size. This scientific phenomenon underpins the technique of laser diffraction and is exploited by paint, coating and pigment manufacturers to achieve desirable product performance. The size of particles in a surface coating affects performance-defining parameters such as hue and tint strength; hiding/transparency - the coverage of the product; and gloss.

With consumer products, food being the prime example, particle size can influence our enjoyment of a product or our perception of its quality, both of which are valuable commodities. The particle size of coffee, the extent to which it is ground, impacts the flavour released during brewing while a fine particle size in chocolate imparts a smooth mouth feel that is superior to a grainy finish.

Ceramic powders are routinely processed to form solid components of precise size and shape. Typically a cast of the component is first formed by either filling or coating a mould with a ceramic suspension. Drying produces a 'green body', which is then fired to sinter the ceramic particles and impart properties such as heat resistance and mechanical strength.

The particle size and distribution of ceramic powders is critical since it influences the:

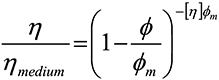

The influence of particle size on suspension rheology is of general interest beyond this sector. The Krieger-Dougherty equation describes the correlation between suspension rheology and particle size distribution at low shear rates:

$$\frac{\eta}{\eta_{\text{medium}}} = \left(1 - \frac{\phi}{\phi_m}\right)^{-[\eta]\,\phi_m}$$

where η is the viscosity of the suspension as a whole, ηmedium is the viscosity of the base liquid, φ is the volume fraction of solids in the suspension, φm is the maximum packing fraction of solids in the suspension and [η] is the intrinsic viscosity (2.5 for rigid spheres).

This equation predicts that, for a fixed volume fraction, suspension viscosity will decrease with increasing maximum packing fraction. Since increasing the polydispersity of a sample increases the maximum packing fraction (see 'packing density'), controlling particle size distribution is one way to manipulate rheology to meet the requirements of the process.
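
The trend can be checked numerically. The sketch below assumes the commonly quoted form of the equation (given in the docstring); the two maximum packing fractions are illustrative values chosen to represent a narrow and a broader size distribution, not measured data.

```python
def relative_viscosity(phi: float, phi_max: float,
                       intrinsic_viscosity: float = 2.5) -> float:
    """Suspension viscosity divided by the viscosity of the base liquid.

    Assumed form: eta / eta_medium = (1 - phi/phi_max) ** (-[eta] * phi_max)
    phi      volume fraction of solids
    phi_max  maximum packing fraction
    """
    if not 0.0 <= phi < phi_max <= 1.0:
        raise ValueError("require 0 <= phi < phi_max <= 1")
    return (1.0 - phi / phi_max) ** (-intrinsic_viscosity * phi_max)


if __name__ == "__main__":
    phi = 0.50  # fixed solids loading, illustrative
    for label, phi_max in (("narrow distribution", 0.64),
                           ("broad distribution", 0.74)):
        ratio = relative_viscosity(phi, phi_max)
        print(f"{label}: phi_max={phi_max:.2f}, eta/eta_medium={ratio:.1f}")
```

At the same solids loading, the higher maximum packing fraction returns the lower relative viscosity, which is the behaviour the text describes.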

Many foods contain oil/water emulsions, or are emulsified at some point during their manufacture. Within the dairy industry, milk is a good example of a naturally occurring emulsion, while ice-cream and cream liqueurs are just two of many processed products for which emulsification is an important manufacturing step.

The bulk properties of emulsified food depend on their colloidal characteristics, the size of fat droplets present in dairy products influencing important parameters such as:

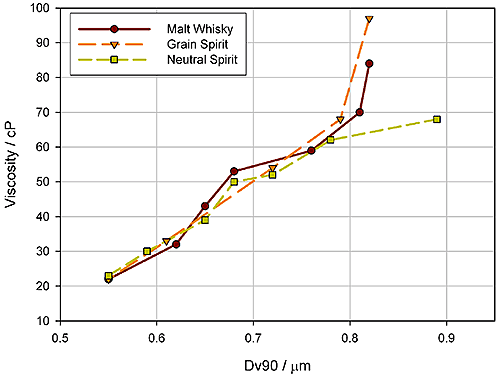

The figure below shows data relating to emulsion stability for a cream liqueur. Often, emulsions such as cream liqueurs are found to increase in viscosity and even gel during prolonged storage*. These data show that increases in viscosity measured over time correlate with increases in the Dv90 of the emulsion. The emulsion is not stable but, over time, forms a flocculated droplet network that increases the viscosity of the product. Here, then, particle size measurement enables the assessment of different strategies for improving the long-term stability of the product.
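
Dv90 is the size below which 90% of the sample volume lies. As a minimal sketch of how such a percentile can be read from a binned volume distribution, the code below interpolates linearly within each size band. Instrument software may well interpolate differently, and the bin edges and volumes shown are invented for illustration.

```python
def dv_percentile(bin_edges_um, volume_in_bin, percentile):
    """Size (um) below which `percentile` % of the total volume lies.

    bin_edges_um   ascending size-band limits, length n + 1
    volume_in_bin  volume (any consistent unit) in each band, length n
    Linear interpolation inside a band is a simplifying assumption.
    """
    if len(bin_edges_um) != len(volume_in_bin) + 1:
        raise ValueError("need one more edge than bins")
    if not 0 < percentile < 100:
        raise ValueError("percentile must lie between 0 and 100")
    total = sum(volume_in_bin)
    if total <= 0:
        raise ValueError("distribution is empty")

    target = total * percentile / 100.0
    cumulative = 0.0
    for lower, upper, volume in zip(bin_edges_um, bin_edges_um[1:], volume_in_bin):
        if volume > 0 and cumulative + volume >= target:
            fraction = (target - cumulative) / volume
            return lower + fraction * (upper - lower)
        cumulative += volume
    return bin_edges_um[-1]


if __name__ == "__main__":
    edges = [1.0, 2.0, 4.0, 8.0, 16.0]   # hypothetical size bands, um
    volumes = [10.0, 40.0, 40.0, 10.0]   # hypothetical volume % per band
    for p in (10, 50, 90):
        print(f"Dv{p} = {dv_percentile(edges, volumes, p):.2f} um")
```

Tracking the value returned for the 90th percentile across stored samples gives the kind of time series that the correlation with viscosity relies on.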

* Muir, D.D., McCrae-Homsma, C.H. & Sweetsur, A.W.M., "Characterization of dairy emulsions by forward lobe laser light scattering - application to cream liqueurs", Milchwissenschaft (1991) 46: 691-694

The preceding exploration of the links between particle size and performance is far from exhaustive, and not all of the requirements for particle size data that we have highlighted are met by laser diffraction alone. Surveying the applications does however serve to emphasize some key attributes required of a particle sizing technique, which are fulfilled by laser diffraction:

Laser diffraction is an ensemble particle sizing technique, which means it generates a result for the whole sample rather than building up a size distribution from measurements of individual particles. A sample passing through a collimated laser beam scatters light over a range of angles. Large particles generate a high scattering intensity at relatively narrow angles to the incident beam, while smaller particles produce a lower intensity signal but at much wider angles. Laser diffraction analyzers record the angular dependence of the intensity of light scattered by a sample, using an array of detectors. The range of angles over which measurements are made directly relates to the particle size range which can be measured in a single measurement.
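
The inverse relationship between particle size and scattering angle can be illustrated with the Fraunhofer (Airy) diffraction pattern of a circular obstacle, whose first intensity minimum falls at sin θ = 1.22 λ/d. This is a simplified picture, valid only for particles much larger than the wavelength. The 0.633 µm wavelength (a helium-neon laser line) is an assumption for the example, not a property of any specific analyzer.

```python
import math


def first_minimum_angle_deg(diameter_um: float,
                            wavelength_um: float = 0.633) -> float:
    """Angle (degrees) of the first dark ring in the Airy pattern.

    Uses sin(theta) = 1.22 * wavelength / diameter, which only holds in
    the Fraunhofer picture (particle much larger than the wavelength).
    """
    if diameter_um <= 0 or wavelength_um <= 0:
        raise ValueError("diameter and wavelength must be positive")
    ratio = 1.22 * wavelength_um / diameter_um
    if ratio > 1.0:
        raise ValueError("particle too small for this approximation")
    return math.degrees(math.asin(ratio))


if __name__ == "__main__":
    for d in (1000.0, 100.0, 10.0, 1.0):
        print(f"{d:7.1f} um -> first minimum at {first_minimum_angle_deg(d):8.4f} deg")
```

The angles span several orders of magnitude across this size range, which is why the angular coverage of the detector array governs the size range accessible in a single measurement.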

The particle size distribution of the sample is calculated from the detected scattering data using an appropriate theory of light behaviour. The latest version of ISO13320 [1] (the ISO standard for laser diffraction) recommends the use of Mie theory for all particles in the size range over which laser diffraction is applied, which is 0.1 to 3000 microns.

The non-destructive nature of laser diffraction is an inherent advantage of the technique. Furthermore, as the above analysis makes clear, particle size measurement by laser diffraction relies on the laws of light behaviour, eliminating the need for instrument calibration. The measurement range over which the method is applicable is intrinsically suitable for many manufacturing applications and the fact that the technique is an ensemble one means that measurement times should be short.

Over and above these attractions, however, the dominance of laser diffraction has been driven by instrument manufacturers harnessing the technique's intrinsic advantages in reliable easy-to-use systems. Developments over the last ten to fifteen years have been pivotal.

Although ISO13320 states 0.1 to 3000 microns as the overall size range over which laser diffraction can be applied, the practical realization of this capability has required significant advances in optical hardware. In early laser diffraction systems, measurement over a more limited range than this could only be achieved by using different lenses to capture and focus the scattered light onto the laser diffraction detector array. The switching of lenses, associated as it is with re-alignment of the instrument, limits flexibility and/or productivity. It is especially disadvantageous when analyzing samples with a very broad particle size distribution or when studying the progressive reduction of particle size, for example during milling. Improvements in optical design have largely eliminated the need for multi-lens systems although they remain in use; their limitations are less of an issue for those routinely measuring closely similar samples within a well-defined size range.

In assessing the progress towards improved optics it is important to recognize that attaining the widest possible measurement range is not the only goal. Resolution is equally critical. In simple terms, measuring a precise particle size distribution relies not only on detecting particles at either extreme of the distribution, but also accurately resolving the population into size fractions. Even today laser diffraction analyzers vary considerably in their ability to reliably quantify the amount of sample present in each size fraction across the quoted measurement range of the instrument. Poor resolving capability compromises the generation of a reliable particle size distribution and the ability to quantify, for example, the amount of fines or coarse particles present in a sample, which is often the purpose of the measurement.

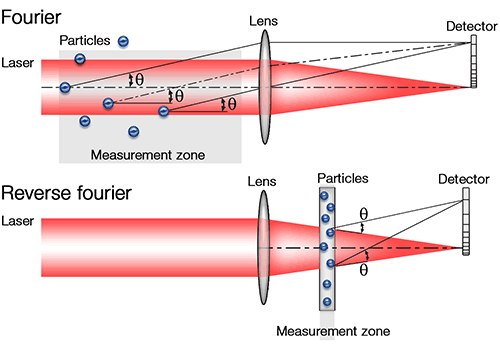

ISO13320 provides a useful summary analysis of the relative merits of the two optical set-ups that now dominate commercial laser diffraction system design. The 'classic' forward Fourier optical set-up, extremely common in instruments developed during the 1980s, has the data collection lens positioned after the measurement zone (see figure 3). This has the advantage of offering a wide working range (this is the maximum distance between the particles and the lens) and is therefore especially suitable for spray measurement, where the particles may be distributed across a wide path length. In this set-up, the lens can be changed to focus scattering from specific angular ranges onto the detector array, thus enabling the measurement of different particle size ranges. However, the maximum angle, and therefore the minimum particle size, which can be measured is limited.

In contrast, in a Reverse Fourier set-up, which ISO13320 now recognizes as a standard alternative in laser diffraction instrument design, the lens is positioned before the measurement zone. This set-up restricts the path length over which measurements can be made, but allows detection of scattered light over a wider range of angles, as detectors can be positioned both in front of and behind the cell. This gives access to a broader dynamic range, without requiring lens changes, and consequently better resolution of the presence of out-of-specification particles.

A further goal in optical development has been to extend capability in the sub-micron range, an area of increasing industrial interest. Hardware features that address this issue, and that can in certain circumstances extend measurement down below 0.1 microns include:

Alongside these advances in optical hardware have come order of magnitude improvements in computing power. In the last few years this has largely resolved the issue of choice of optical model for laser diffraction analysis.

The Mie theory of light scattering provides a detailed mathematical description of the correlation between the particle size distribution of a sample and the light scattering it produces, and has long been recognized as the most suitable model for laser diffraction analysis. However, during the early development of laser diffraction, the lack of computing power to apply Mie Theory encouraged adoption of the Fraunhofer Approximation.

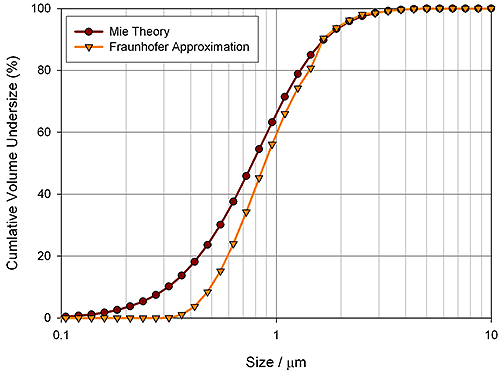

To simplify the predictions of light scattering behaviour, the Fraunhofer Approximation makes a series of assumptions. The result is a simpler model that eases the computational burden associated with particle size determination, but unfortunately gives erroneous data in certain circumstances. Now that the use of Mie is possible, any decision to adopt the Fraunhofer Approximation must be carefully justified, paying particular attention to: the size of particles present; whether they are transparent or absorbing; and the difference between the refractive index of the dispersant and particle [1]. The use of Mie is especially important for measurement in the sub-micron range, which is of growing interest to many industrial users. In future, the ease of use and applicability of Mie is likely to signal a continuing decline in the application of Fraunhofer, although one drawback of Mie, the need for refractive index data for the materials being measured, is an ongoing deterrent for some.

The pigment industry relies on particle size data to control the optical properties of its products. The figure below shows particle size data for calcium carbonate, a filler used to whiten paper. For this product, there is a narrow particle size band within which optical scattering efficiency increases, delivering optimal whiteness.

Measured scattering data for calcium carbonate have been de-convoluted using the Fraunhofer Approximation and Mie Theory to contrast the results obtained with each. Fraunhofer reports the particle size distribution as larger than it is, an error that arises from the assumption made within the model that the scattering efficiency of a particle is independent of size. In reality, the scattering efficiency changes considerably below 1 µm, causing the Fraunhofer Approximation to incorrectly report the volume of material in this size range. In contrast, by predicting the change in scattering efficiency, Mie Theory correctly calculates the extent of the size distribution within the submicron region.
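
The size dependence of scattering efficiency can be sketched without a full Mie calculation by using van de Hulst's anomalous diffraction approximation for extinction efficiency. This is a stand-in for Mie theory, strictly intended for particles whose refractive index is close to that of the dispersant, and it is not what an analyzer computes. The refractive indices and wavelength below are assumed values for illustration only.

```python
import math


def extinction_efficiency_ada(diameter_um: float,
                              n_particle: float = 1.6,    # assumed value
                              n_medium: float = 1.33,     # assumed, water-like
                              wavelength_um: float = 0.633) -> float:
    """Extinction efficiency from the anomalous diffraction approximation.

    Q = 2 - (4/rho) sin(rho) + (4/rho^2) (1 - cos(rho)),
    with rho = 2 x (m - 1), x = pi d n_medium / wavelength, m = n_p / n_m.
    NOT a Mie calculation; non-absorbing particles only.
    """
    if diameter_um <= 0 or n_particle == n_medium:
        raise ValueError("need a positive diameter and an index contrast")
    x = math.pi * diameter_um * n_medium / wavelength_um  # size parameter
    rho = 2.0 * x * (n_particle / n_medium - 1.0)         # phase-shift parameter
    return 2.0 - (4.0 / rho) * math.sin(rho) + (4.0 / rho ** 2) * (1.0 - math.cos(rho))


if __name__ == "__main__":
    for d in (0.1, 0.3, 1.0, 10.0, 100.0):
        print(f"{d:6.1f} um -> Q_ext ~ {extinction_efficiency_ada(d):.3f}")
```

With these assumed values the efficiency settles close to 2 for large particles, which is the constant behaviour Fraunhofer effectively assumes, but falls away sharply for particles well below 1 µm.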

Another benefit of improvements in computing power, coupled with software capabilities, has been significant improvements in the 'ease of use' of laser diffraction measurements, through the use of measurement automation.

Full automation of an analytical technique greatly eases its transition into new markets and new application areas. The best laser diffraction systems automate measurement through the use of standard operating procedures (SOPs). Once set up, these detailed, step-by-step instructions automatically drive the analytical process from receipt of the sample through to data delivery, with negligible input from the operator. Sample loading may be the only remaining manual task.

The productivity gains of automation are readily appreciated but equally important is the enhanced data integrity it delivers. Eliminating operator-to-operator variability improves repeatability and reproducibility. Once developed, a method can be rolled out across the world with confidence, and implemented by relatively inexperienced personnel, with little training. Reliable data, better productivity and streamlined global specification transfer are valuable commodities in today's highly competitive market place.

The success of automation has also underpinned the transition of laser diffraction into the process arena. At-line systems for operator use are routine; continuous analyzers for in- and on-line measurement have long been a commercial reality. These systems are widely used for real-time monitoring and automated control across a number of manufacturing sectors. Laser diffraction is now successfully applied right across the product lifecycle, from development, through to manufacturing and QC.

The maturation of laser diffraction technology has produced instruments that, in many instances, substantially fulfil industrial requirements for particle size measurement. Alongside the advances already discussed have come practical hardware features that ease sample loading, and simplify the transition between wet and dry sample measurement. As a result, laser diffraction systems are now perceived by many as productive, flexible workhorses - a reliable part of a company's analytical armoury. However, challenges remain and the needs of industry continue to evolve.

A key issue for manufacturers of laser diffraction instrumentation is how to ensure that the performance gains made over the last decade are fully exploited by the users. Getting to the stage of push button analysis is an eminently realistic goal, but it relies on effective decision-making during method development: Is the sample representative? Should it be measured wet or dry? Has the sample been appropriately dispersed? Is the reproducibility of the method acceptable? These questions and many others still require relatively expert consideration prior to the adoption of a method for routine measurement.
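
The reproducibility question lends itself to a simple numerical check. The sketch below computes the coefficient of variation of a reported parameter, such as Dv50, over repeat measurements and compares it with an acceptance limit. The limit and the readings are hypothetical; appropriate criteria should be taken from ISO13320 or an in-house specification.

```python
from statistics import mean, stdev


def coefficient_of_variation_percent(values) -> float:
    """Sample standard deviation as a percentage of the mean."""
    if len(values) < 2:
        raise ValueError("need at least two repeat measurements")
    average = mean(values)
    if average <= 0:
        raise ValueError("mean must be positive for a size parameter")
    return 100.0 * stdev(values) / average


def is_repeatable(values, limit_percent: float) -> bool:
    """True when the spread of repeat results is within the chosen limit."""
    return coefficient_of_variation_percent(values) <= limit_percent


if __name__ == "__main__":
    dv50_repeats = [24.8, 25.1, 25.0, 24.9, 25.3]  # hypothetical readings, um
    limit = 3.0                                    # hypothetical acceptance limit, %
    cov = coefficient_of_variation_percent(dv50_repeats)
    print(f"COV = {cov:.2f} %, acceptable: {is_repeatable(dv50_repeats, limit)}")
```

Running the same check on results from different operators or sites extends it from repeatability to reproducibility.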

Greater coverage in ISO13320 reflects the growth in understanding of method development that has occurred over the last decade and the standard is a valuable resource for those charged with the task. Disseminating expert knowledge on this topic to those who require it remains an ongoing challenge, but will help users to achieve greatest benefit from their use of the technique and maximize the return on analytical spend. Advances in this area will also help with further penetration of the technique, to markets and applications where the perceived difficulties of method development remain a barrier to change.

The drive towards automating both analysis and control within manufacturing plants suggests that measurement in the process arena will also be an area of continued focus. Consider the example of the cement industry. Here, manufacturers have found through laboratory studies that laser diffraction more precisely reflects product performance than does conventional Blaine measurement. Furthermore, with laser diffraction, once an optimal specification has been defined it can be transferred out to the plant, via an on-line analyzer. Continuous monitoring transforms processing efficiency and facilitates real-time release of the product, a goal shared with many others, including the pharmaceutical industry. Streamlining the transition of specifications from lab to line means that plant operation can benefit fully from lab-based research, and supports ongoing efforts towards the automation of manufacturing processes.

Finally, although the focus in this article has been particle size, the parameter measured by laser diffraction, some sectors already know that the performance of their particles is a function of both size and shape. An analytical newcomer, relatively speaking, automated imaging does not yet enjoy the levels of uptake of laser diffraction, but the technology is developing rapidly. Advances in cameras and computing are cutting the time taken to gather statistically relevant shape parameters to minutes. Combining morphological imaging with chemical identification techniques, such as Raman spectroscopy, further increases the information flow.

Laser diffraction is complementary to, and complemented by, these newer techniques. Exploiting them in combination is highly efficient. For example, imaging a sample is a straightforward way of checking its state of dispersion. Similarly, in the development of specifications, if chemical imaging reveals that the uniformity of an active ingredient in a final product depends on its particle size, then a particle size specification alone may be adequate to prevent a problem once the product is commercialised. As newer technologies mature, ways to use them productively alongside laser diffraction will become increasingly obvious. That said, the many advantages of laser diffraction suggest that its appeal will endure well into the future, and that the technique will retain its place as the preferred choice for industrial particle size measurement.

[1] ISO13320:2009, Particle size analysis - Laser diffraction methods